At the recent Autonomy Investor Day, Tesla highlighted the progress it’s been making on its Autopilot system, and discussed its vision for the future of self-driving. Elon Musk and several other company execs, including Director Peter Bannon, Senior Director of Artificial Intelligence Andrej Karpathy, and VP of Engineering Stuart Bowers, made presentations for an audience of key investors, some of whom also got to test-drive (or test-ride) a prototype of the latest tech.

Visually oriented folks can watch the entire presentation, which runs nearly four hours, or a short summary provided by Tech Insider.

Tesla has not yet delivered the promised coast-to-coast autonomous drive, but it did release a video showing a Model 3 driving itself on a mix of highways and local roads around Tesla’s Palo Alto headquarters, with no human hands on the wheel.

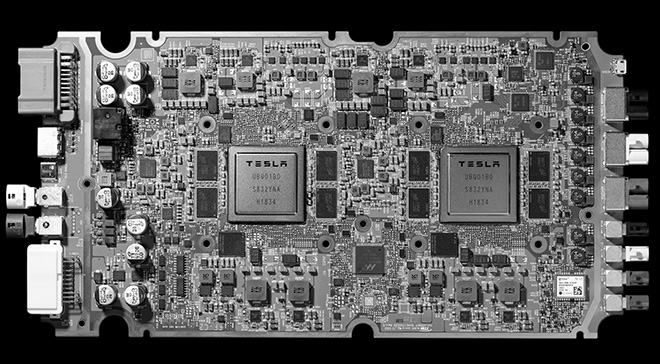

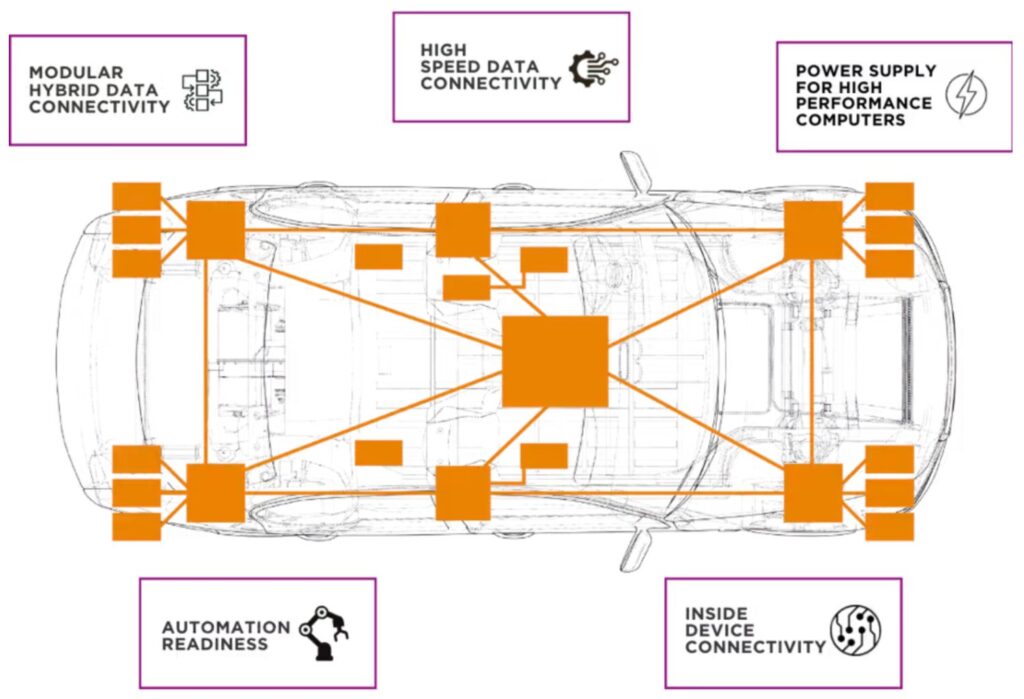

Much of the day’s news had to do with the Tesla Full Self-Driving (FSD) computer (formerly known as the Autopilot 3.0 upgrade), the brain of the new system, which is now included in all Teslas being produced. Tesla designed the FSD computer solely for self-driving tasks, and limited power consumption to 100 watts to avoid impacting the vehicle’s range. It includes two totally redundant systems on a single circuit board – if any aspect of the system fails, Musk says, the car will keep driving.

Noting that Tesla, as an automaker, had never before designed its own chip, Musk claimed that the FSD chip is “the best chip in the world…by a huge margin.”

Competitor NVIDIA quickly disputed that claim. “NVIDIA is the standard Musk compares Tesla to – we’re the only other company framing this problem in terms of trillions of operations per second, or TOPS,” wrote NVIDIA VP of Autonomous Machines Rob Csongor in a blog post. “But while we agree with him on the big picture – that this is a challenge that can only be tackled with supercomputer-class systems – there are a few inaccuracies in Tesla’s Autonomy Day presentation.”

“Tesla’s two-chip FSD computer at 144 TOPS would compare against the NVIDIA DRIVE AGX Pegasus computer which runs at 320 TOPS for AI perception, localization and path planning,” Csongor wrote. “Additionally, while [NVIDIA’s] Xavier delivers 30 TOPS of processing, Tesla erroneously stated that it delivers 21 TOPS.”
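For readers who want to see how far apart the quoted numbers are, here is a quick back-of-the-envelope sketch. It uses only the TOPS figures cited above; the variable names are our own, and peak TOPS is a rough yardstick that says nothing about power draw, cost, or real-world driving performance.

```python
# TOPS figures as quoted in the article (trillions of operations per second).
TESLA_FSD_TOPS = 144          # Tesla's two-chip FSD computer
NVIDIA_PEGASUS_TOPS = 320     # NVIDIA DRIVE AGX Pegasus
XAVIER_TOPS_PER_NVIDIA = 30   # Xavier, according to NVIDIA
XAVIER_TOPS_PER_TESLA = 21    # Xavier, as stated by Tesla


def ratio(numerator: float, denominator: float) -> float:
    """Return how many times larger the first figure is than the second."""
    return numerator / denominator


# Pegasus versus the FSD computer, using NVIDIA's numbers.
pegasus_vs_fsd = ratio(NVIDIA_PEGASUS_TOPS, TESLA_FSD_TOPS)

# FSD computer versus Xavier, under each company's Xavier figure.
fsd_vs_xavier_tesla_figure = ratio(TESLA_FSD_TOPS, XAVIER_TOPS_PER_TESLA)
fsd_vs_xavier_nvidia_figure = ratio(TESLA_FSD_TOPS, XAVIER_TOPS_PER_NVIDIA)

print(f"Pegasus / FSD: {pegasus_vs_fsd:.2f}x")
print(f"FSD / Xavier (Tesla's figure): {fsd_vs_xavier_tesla_figure:.2f}x")
print(f"FSD / Xavier (NVIDIA's figure): {fsd_vs_xavier_nvidia_figure:.2f}x")
```

On these figures, Pegasus comes out at roughly 2.2 times the FSD computer, while the FSD computer's lead over Xavier shrinks from about 6.9 times to 4.8 times depending on whose Xavier number is used.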

Noting that self-driving will require “massive amounts of computing performance,” Csongor concedes that Tesla has raised the bar. “Every other automaker will need to deliver this level of performance. There are only two places where you can get that AI computing horsepower: NVIDIA and Tesla. And only one of these is an open platform that’s available for the industry to build on.”

Tesla’s latest Autopilot hardware suite includes 8 cameras, 12 ultrasonic sensors, radar, GPS, an inertial measurement unit, and sensors that measure the angle of the steering wheel and accelerator pedal.

One feature it does not have is lidar, a technology that uses pulsed laser light to measure distance to a target, and that is favored by most other companies that are working on autonomous driving. In his presentation, the ever-iconoclastic Musk dismissed lidar in terms reminiscent of those he has used to describe hydrogen fuel cells. Lidar is “a fool’s errand,” he said. It’s “expensive” and “unnecessary,” and “anyone relying on lidar is doomed.”
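The distance measurement at the heart of pulsed lidar is simple time-of-flight arithmetic: time a laser pulse's round trip and multiply by the speed of light. The snippet below is a minimal sketch of that principle only; the function name is hypothetical, and real lidar units add scanning, filtering, and calibration that are not shown here.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in a vacuum


def lidar_range_m(round_trip_s: float) -> float:
    """Estimate distance to a target from a pulse's round-trip time.

    The pulse travels to the target and back, so the one-way distance
    is half of the total path length.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


# A pulse that returns after one microsecond hit something about 150 m away.
print(f"{lidar_range_m(1e-6):.1f} m")
```

The tiny time scales involved (a microsecond for a 150-meter target) hint at why the hardware needs precise timing electronics.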

Some autonomy experts agree with Musk. Gizmodo reports that Cornell researchers argue in an upcoming paper that a pair of cheap cameras mounted behind a vehicle’s windshield can produce stereoscopic images that can be converted to 3D data almost as precise as that generated by lidar, at a fraction of the cost.
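To give a feel for how two cameras can yield distance, here is a sketch of the textbook stereo relation: depth equals focal length times the distance between the cameras, divided by how far an object shifts between the two images (the disparity). This is not the Cornell researchers' method, which the article does not detail; the function name and the example camera parameters are assumptions chosen for illustration.

```python
def stereo_depth_m(focal_length_px: float, baseline_m: float,
                   disparity_px: float) -> float:
    """Depth of a point seen by two parallel, rectified cameras.

    focal_length_px: camera focal length, in pixels
    baseline_m:      distance between the two cameras, in meters
    disparity_px:    horizontal shift of the point between images, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Assumed example rig: 1000 px focal length, cameras 0.5 m apart.
near = stereo_depth_m(1000.0, 0.5, 10.0)  # object shifted by 10 px
far = stereo_depth_m(1000.0, 0.5, 9.0)    # same object, 1 px matching error

print(f"{near:.1f} m vs {far:.1f} m")
```

In this made-up example, a one-pixel matching error moves the estimate from 50 meters to more than 55, which illustrates why squeezing lidar-like precision out of cameras is a research problem rather than a solved one.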

Above all, Tesla is relying on the vast trove of real-world driving data recorded by the thousands of Autopilot-equipped Tesla vehicles on the road. Using various AI techniques, Tesla is training its neural networks on this data to recognize and react to the wide variety of situations that might be encountered in the wild.

Sources: EVannex, Forbes, NVIDIA, Tesla, Tech Insider